Client Background

The client is a multinational metals and mining corporation that produces iron ore, copper, diamonds, gold and uranium.

Context and Business Objective

The client employs operators at different locations to mine diamonds. These operators run the cutting machines, setting parameters such as wheel speed, rotation rate and down speed.

The size of the extracted rock chips varies with the parameters the operator sets. The client therefore wanted a solution that tells the cutter operator which cutter settings are expected to produce the largest chip size.

Current Approach

The client identifies the best tunable parameters heuristically. This approach has a couple of drawbacks:

- It doesn’t quantify the contribution of each individual parameter to the output

- It does not account for the interaction between parameters, as all heuristics are defined in one dimension, or two at most

Proposed Approach and Solution

Valiance proposed building a mathematical relationship between the tunable parameters and the output (Good/Bad). The advantages of this approach are:

- We can approximate the quality of the output for any given input parameters without testing them in the field

- This approach quantifies the contribution of individual parameters and accounts for the interaction of tunable parameters

- Once the relationship is developed between tunable parameters and the output, we can move algorithmically within the search space to find globally optimal tunable parameters.

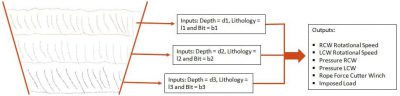

As trench digging goes deeper into the earth, the soil level and the lithology of the rock change. Our machine learning engine therefore optimized the tunable parameters for a given depth, lithology and bit.
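The idea of searching the parameter space for fixed conditions can be sketched as follows. This is a minimal illustration, not the engine described above: `predict_bad_probability` is a hypothetical stand-in for the trained model with an invented formula, the parameter ranges and the `CONTEXT` values are assumptions, and an exhaustive grid search is used here only because it is the simplest way to find the best point on a small discrete grid.

```python
import itertools
import math


def predict_bad_probability(settings, context):
    """Hypothetical stand-in for the trained classifier: P(chip is bad).

    The formula is invented for illustration. It includes an interaction
    term between wheel speed and rotation rate, and shifts with lithology.
    This toy version ignores depth and bit.
    """
    a = (settings["wheel_speed"] - 60) / 20
    b = (settings["rotation_rate"] - 30) / 10
    c = (settings["down_speed"] - 5) / 2
    lithology_shift = {"soft": -0.5, "hard": 0.5}[context["lithology"]]
    z = a * a + b * b + c * c + 0.5 * a * b - 1.0 + lithology_shift
    return 1.0 / (1.0 + math.exp(-z))


def grid_search(grid, context, score=predict_bad_probability):
    """Evaluate every combination of settings and keep the lowest score."""
    names = list(grid)
    best_settings, best_score = None, float("inf")
    for values in itertools.product(*(grid[name] for name in names)):
        candidate = dict(zip(names, values))
        candidate_score = score(candidate, context)
        if candidate_score < best_score:
            best_settings, best_score = candidate, candidate_score
    return best_settings, best_score


# Assumed candidate values for each tunable parameter (arbitrary units).
GRID = {
    "wheel_speed": range(20, 101, 10),
    "rotation_rate": range(10, 51, 5),
    "down_speed": range(1, 10),
}

# Conditions held fixed during the search (assumed example values).
CONTEXT = {"depth_m": 12.0, "lithology": "hard", "bit": "A"}

best_settings, best_score = grid_search(GRID, CONTEXT)
print(best_settings, round(best_score, 3))
```

Because good is encoded as 0 and bad as 1, minimizing the predicted probability of a bad chip is the same as maximizing the chance of a good one. For a larger or continuous search space, a dedicated global optimizer would likely replace the grid.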

Modeling Pipeline

- We developed a classification model that predicts the quality of the chip size (good/bad) from the independent variables mentioned above

- We trained the model on 80% of the samples and validated it on the remaining 20%

- We assigned 0 to good and 1 to bad because the optimization was framed as a standard minimization problem

We validated four parametric classifiers (Logistic Regression, Linear Discriminant Analysis (LDA), Perceptron and Naïve Bayes) and one tree-based classifier (Gradient Boosting Machine).
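The pipeline steps above can be sketched in a few lines. This is a minimal sketch under stated assumptions, not the original pipeline: the data is synthetic, the two features and the labelling rule are invented, and only one of the listed classifiers (logistic regression) is implemented, by hand with gradient descent so that the example needs nothing beyond the standard library. In practice a library such as scikit-learn would typically be used to fit and compare all five classifiers.

```python
import math
import random


def make_synthetic_samples(n, seed=0):
    """Create (features, label) pairs; label 0 = good chip, 1 = bad chip.

    The features stand for two scaled cutter settings and the labelling
    rule is invented purely for illustration.
    """
    rng = random.Random(seed)
    samples = []
    for _ in range(n):
        wheel_speed = rng.uniform(-1, 1)
        rotation_rate = rng.uniform(-1, 1)
        noisy_score = wheel_speed + rotation_rate + rng.gauss(0, 0.2)
        samples.append(([wheel_speed, rotation_rate], 1 if noisy_score > 0 else 0))
    return samples


def split_samples(samples, validation_fraction=0.2, seed=0):
    """Shuffle, then hold out a fraction of the samples for validation."""
    shuffled = samples[:]
    random.Random(seed).shuffle(shuffled)
    n_validation = int(round(len(shuffled) * validation_fraction))
    return shuffled[n_validation:], shuffled[:n_validation]


def predict_proba_bad(model, features):
    """Return the modelled probability that a sample is bad (label 1)."""
    weights, bias = model
    z = bias + sum(w * x for w, x in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic_regression(train, epochs=300, learning_rate=0.5):
    """Fit weights and bias with batch gradient descent on the log loss."""
    n_features = len(train[0][0])
    weights, bias = [0.0] * n_features, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_features, 0.0
        for features, label in train:
            error = predict_proba_bad((weights, bias), features) - label
            for j, x in enumerate(features):
                grad_w[j] += error * x
            grad_b += error
        weights = [w - learning_rate * g / len(train) for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / len(train)
    return weights, bias


def accuracy(model, data):
    """Share of samples whose predicted class matches the label."""
    correct = sum((predict_proba_bad(model, x) >= 0.5) == (y == 1) for x, y in data)
    return correct / len(data)


samples = make_synthetic_samples(500)
train, validation = split_samples(samples)  # 80% train, 20% validation
model = fit_logistic_regression(train)
validation_accuracy = accuracy(model, validation)
print(f"validation accuracy: {validation_accuracy:.2f}")
```

The fitted weights give a rough sense of how much each setting contributes to the predicted outcome. The same split and accuracy check would be repeated for each candidate classifier before choosing one.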

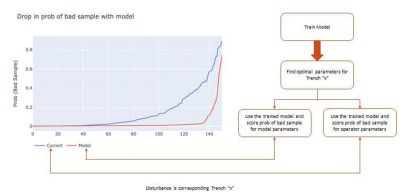

Optimization Model Performance and ROI

- Our optimization exercise was able to convert ~60% of the bad samples into good samples.

- We observed that the tree-based model outperformed all other models with respect to both variance and bias. The model also showed an accuracy of 78%.

- The conversion rate improved by ~15%.