A deepfake scam doesn’t start with a glitch; it starts with trust.

In February 2024, a finance employee at a multinational firm in Hong Kong authorized $25 million across 15 transfers after attending what appeared to be a legitimate video call with senior executives. Every participant on the call except the employee was a deepfake. (https://www.weforum.org/stories/2025/01/deepfakes-different-threat-than-expected/)

By 2026, deepfake phishing is expected to spread across industries. Fraud attempts surged by 3,000% in 2023, deepfake incidents increased 10x year-over-year, and the number of deepfake files is projected to grow from 500,000 in 2023 to 8 million in 2025, which the source describes as a 900% annual surge. (https://keepnetlabs.com/blog/deepfake-statistics-and-trends)

In India, during the 2024 general elections, deepfake attempts surged 280% year-on-year, and over 75% of Indians reported exposure to synthetic political content, including manipulated videos and cloned voices. (https://www.aicerts.ai/news/how-political-misinformation-deepfakes-threaten-2026-elections/)

Deepfakes are no longer experimental tools. They are widely accessible, fast to produce, and capable of spreading across platforms within minutes. The challenge today is not just identifying fake content but verifying authenticity in real time before damage is done.

The Deepfake Surge: A Rapidly Expanding Attack Surface

Deepfake threats are accelerating across industries:

- Deepfake fraud attempts increased 31x in 2023

- Incidents rose 257% in 2024

- 179 cases were recorded in Q1 2025 alone

- Voice deepfakes surged 680% year-over-year

- Deepfakes now represent 6.5% of all fraud attempts

- Fraud losses enabled by generative AI are projected to reach $40 billion by 2027

Financial systems, crypto platforms, enterprises, and public institutions are increasingly targeted.

But the bigger issue is structural: Most video systems still store footage without interpreting it. (https://keepnetlabs.com/blog/deepfake-statistics-and-trends)

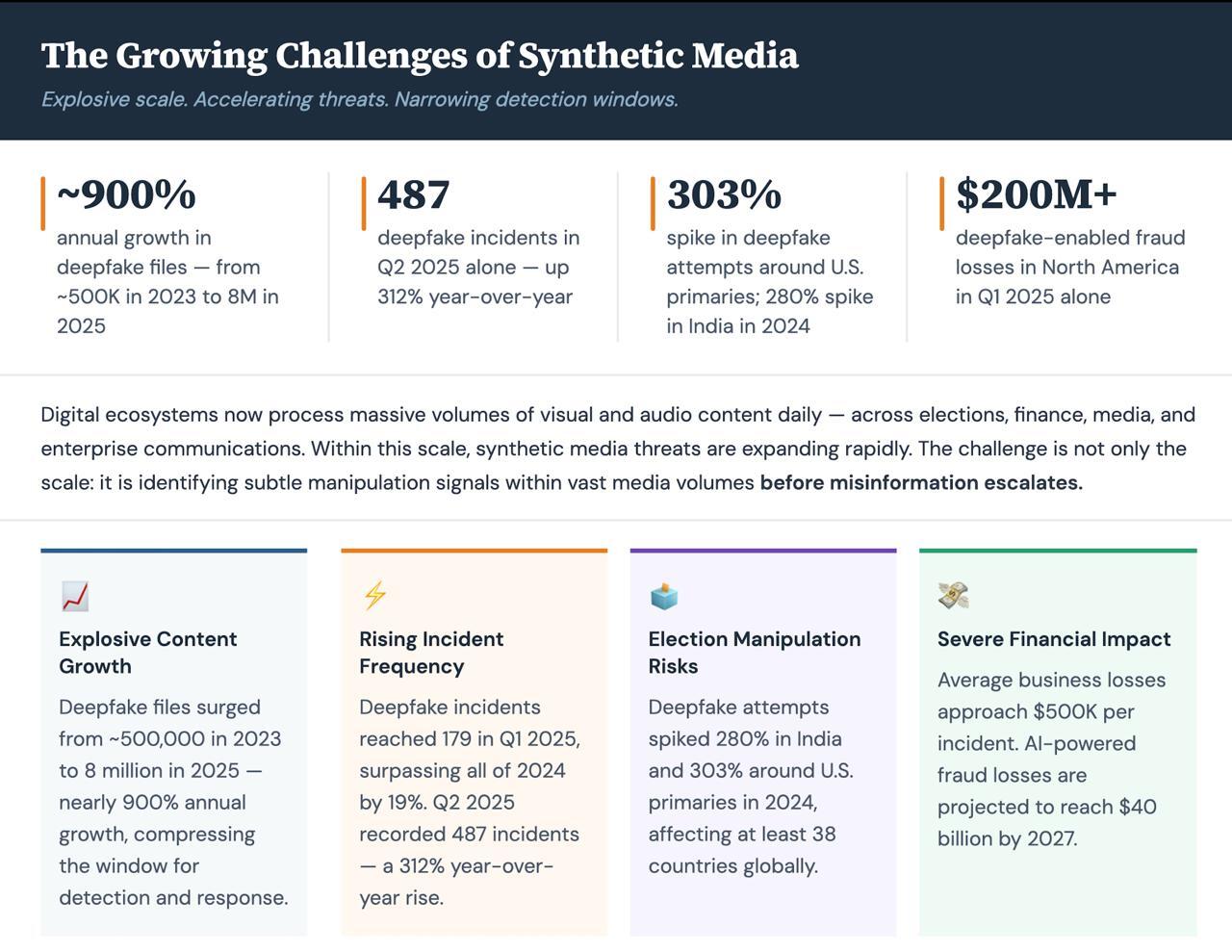

The Growing Challenges of Synthetic Media

Digital ecosystems now process massive volumes of visual and audio content daily across elections, finance, media, and enterprise communications. Within this scale, synthetic media threats are expanding rapidly.

The challenge is not only scale; it is also identifying subtle manipulation signals within vast media volumes before misinformation escalates.

Limitations of Traditional Approaches

Traditional detection methods rely heavily on manual checks and visible inconsistencies. In high-volume environments, these approaches face structural gaps:

- Fragmented visibility: Activity across platforms, apps, and media channels is rarely connected in real time.

- Late detection: Sophisticated deepfakes often surface only after gaining traction.

- Artifact-based identification: Systems depend on visible flaws, such as unnatural blinking or inconsistent lighting, that newer generation tools now easily correct.

- Manual moderation gaps: Human review cannot scale with millions of uploads per hour.

- Limited contextual analysis: Correlating visual, audio, and semantic inconsistencies remains slow and complex.

Traditional systems help react. They do not reliably prevent.

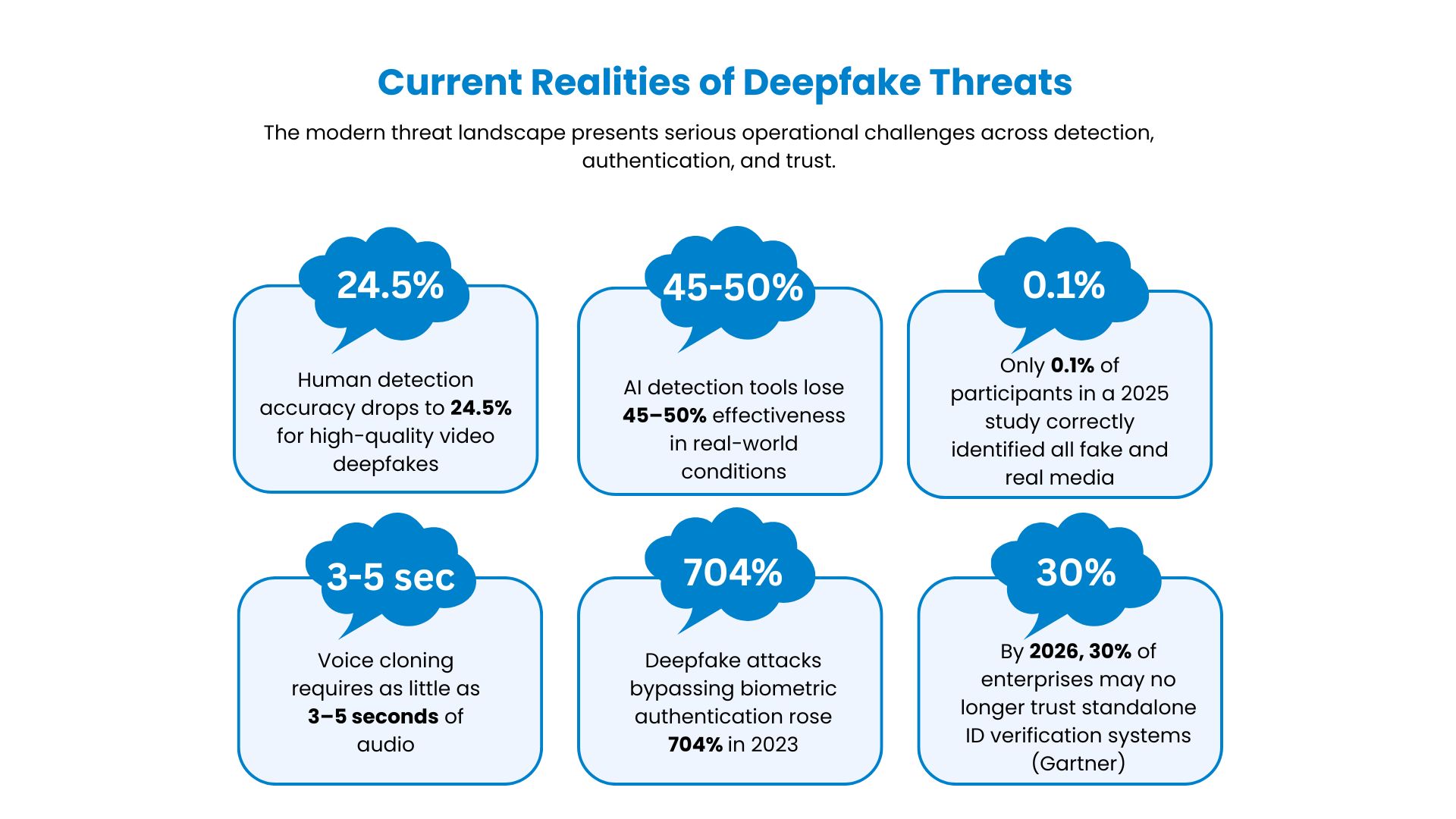

Current Realities of Deepfake Threats

The modern threat landscape presents operational challenges:

Even advanced AI detection tools lose 45–50% of their effectiveness in real-world conditions. (https://keepnetlabs.com/blog/deepfake-statistics-and-trends)

The gap isn’t camera coverage: It’s intelligence.

AI-Powered Deepfake Detection and Filtering

Modern AI systems move beyond visual inspection by analyzing multiple signals simultaneously.

- Multimodal Analysis: Combines visual, audio, and contextual signals instead of relying on one detection layer.

- Temporal Pattern Recognition: Detects inconsistencies across frame sequences, not just static images.

- Spectral Audio Examination: Identifies frequency distortions common in cloned voices.

- Semantic Alignment Checks: Validates whether speech content aligns logically with verified contextual data.

- Ensemble Architectures: Integrates spatial, temporal, and behavioral models for stronger adversarial resilience.

- Real-Time Filtering Pipelines: Lightweight first-pass screening filters high-risk content instantly, followed by deeper analysis when required.

This approach shifts detection from surface-level inspection to intelligent pattern interpretation at scale.
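As a rough illustration of how a first-pass screen and a multimodal ensemble might fit together, the sketch below triages one media item in two stages. Everything in it is an assumption made for illustration: the `MediaItem` fields, the `WEIGHTS` values, and both thresholds are hypothetical, and the scores stand in for the outputs of real detection models that are not shown.

```python
from dataclasses import dataclass

# Hypothetical per-modality weights; a real system would learn or tune these.
WEIGHTS = {"visual": 0.4, "audio": 0.3, "temporal": 0.2, "semantic": 0.1}

FAST_THRESHOLD = 0.3   # assumed: below this, the first pass clears the item
BLOCK_THRESHOLD = 0.7  # assumed: at or above this, the fused score blocks it


@dataclass
class MediaItem:
    item_id: str
    fast_score: float       # lightweight first-pass risk score in [0, 1]
    modality_scores: dict   # heavier per-modality risk scores in [0, 1]


def fuse(scores: dict) -> float:
    """Weighted average over whichever modalities are present."""
    total_weight = sum(WEIGHTS[name] for name in scores)
    if total_weight == 0:
        return 0.0
    weighted = sum(WEIGHTS[name] * value for name, value in scores.items())
    return weighted / total_weight


def triage(item: MediaItem) -> str:
    """Return 'allow', 'review', or 'block' for one media item."""
    if item.fast_score < FAST_THRESHOLD:
        return "allow"      # cheap screen says low risk; skip deep analysis
    if fuse(item.modality_scores) >= BLOCK_THRESHOLD:
        return "block"
    return "review"         # ambiguous: route to a human or a slower model


if __name__ == "__main__":
    suspicious = MediaItem(
        "clip-001", 0.9,
        {"visual": 0.9, "audio": 0.8, "temporal": 0.7, "semantic": 0.6},
    )
    print(triage(suspicious))
```

The design choice worth noting is the ordering: the inexpensive score gates the expensive one, so most benign content never reaches the heavier models, and only items the ensemble cannot settle are sent for review.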

Why It Matters

Deepfakes now target financial approvals, political communication, executive impersonation, biometric authentication, and public platforms. 77% of victims targeted by voice cloning report financial loss. Over 50% of enterprises have faced AI-driven fraud attempts. When synthetic content becomes indistinguishable from reality, traditional verification collapses. Real-time interpretation becomes the only scalable defense.

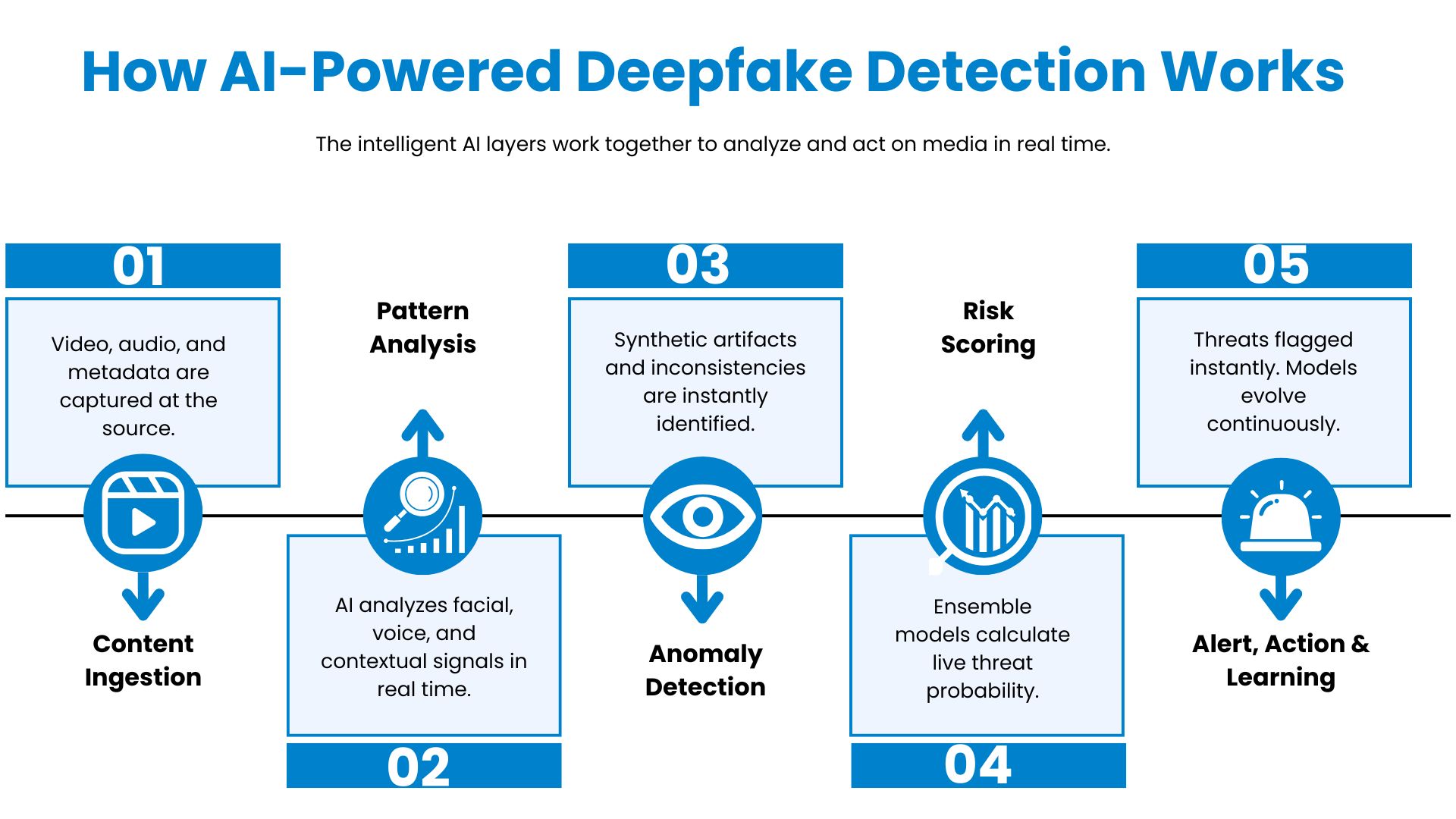

How AI-Powered Deepfake Detection Works

In a typical deployment, intelligent AI layers work together to analyze and act on media in real time.

Result: Observe. Analyze. Filter Early.
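One way to picture the observe, analyze, filter loop on a live stream is a rolling check over per-frame risk scores. The sketch below is a minimal example under stated assumptions: `flag_windows`, the window size, and the threshold are hypothetical, and the per-frame scores are assumed to come from an upstream detector that is not shown.

```python
from collections import deque
from typing import Iterable, Iterator, Tuple


def flag_windows(
    frame_scores: Iterable[float], window: int = 5, threshold: float = 0.6
) -> Iterator[Tuple[int, float]]:
    """Yield (frame_index, rolling_mean) when the mean risk score over the
    last `window` frames reaches `threshold`."""
    recent = deque(maxlen=window)   # keeps only the most recent scores
    for index, score in enumerate(frame_scores):
        recent.append(score)        # observe the newest frame
        if len(recent) == window:
            mean = sum(recent) / window   # analyze the recent sequence
            if mean >= threshold:
                yield index, mean         # filter early: raise a flag


if __name__ == "__main__":
    scores = [0.1, 0.2, 0.1, 0.9, 0.9, 0.9, 0.9, 0.9]
    for index, mean in flag_windows(scores, window=3, threshold=0.6):
        print(f"frame {index}: rolling risk {mean:.2f}")
```

Averaging over a short window means a single noisy frame does not trigger a flag, while a sustained run of high scores does. The trade-off is a delay of a few frames before the first alert.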

Where AI Filtering Matters Most

- Election-related and political communications

- Financial transactions and biometric authentication

- News and citizen journalism

- Social media and messaging streams

- Corporate and executive communications

- Creative and user-generated media

In these environments, even seconds of undetected synthetic content can create outsized impact.

The Future of Deepfake Defense

Deepfake defense is not just about detection accuracy.

It is about:

- Continuous monitoring

- Predictive anomaly identification

- Automated decision support

Surveillance must evolve from visibility to foresight.

Valiance Solutions is making surveillance intelligent and addressing one of the world’s biggest blind spots by turning systems into intelligent, predictive defense infrastructure.